An article by our researchers, titled “Cross-Topic Sentiment Analysis of Wikipedia Articles: A Comparative Study of AI Models“, has been published. The study focuses on one of Wikipedia’s core principles: the neutral point of view (NPOV), which requires information to be presented impartially. The publication demonstrates how modern AI methods can support the analysis of information quality on a global scale, while also revealing the complexity and challenges behind the concept of “neutrality”.

Assessing the neutrality of a text is not simply a matter of detecting positive or negative words. Encyclopedic articles are naturally long and complex, and they may address controversial topics in which subtle differences in language can indicate bias. In addition, different domains, such as politics, history, or the sciences, are characterized by different narrative styles, which makes it difficult to apply a single universal model.

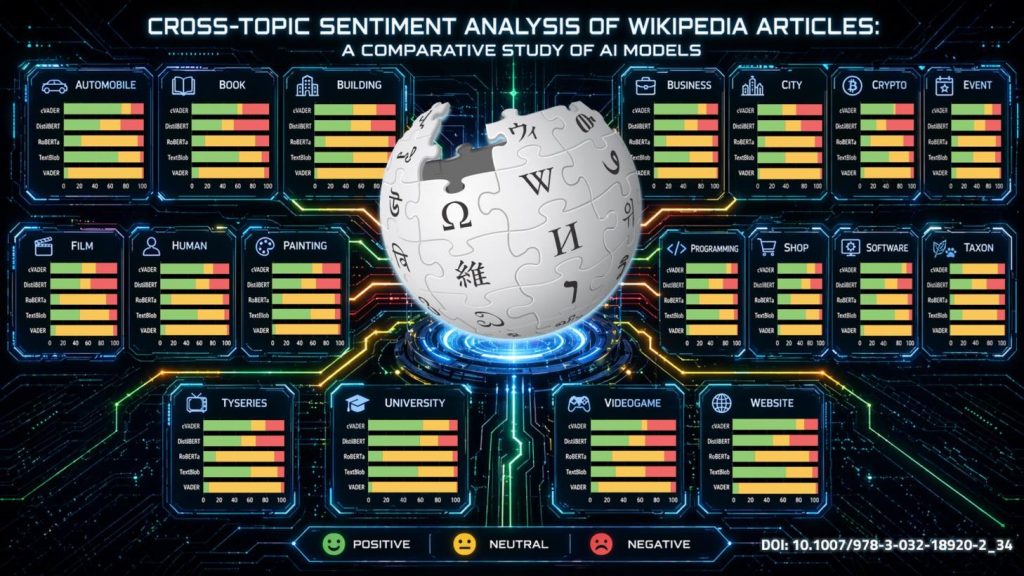

As part of the study, various sentiment analysis models were used: dictionary-based models, namely TextBlob and VADER, as well as models based on transformer architecture, including RoBERTa and DistilBERT. The analysis covered approximately seven million articles from the English-language Wikipedia. Text fragments were first extracted from the articles, after which the articles were classified by topic and assigned to specific quality ratings. The results showed that the sentiment of Wikipedia articles varies depending on the topic, and that the choice of analysis model has a significant impact on the final assessment of content neutrality.

The authors also proposed a practical methodological framework that makes it possible to apply sentiment analysis to long-form texts. The findings of this work may be used, among other things, to automatically monitor the neutrality of content in open knowledge sources, support Wikipedia editors in identifying potentially biased text fragments, and develop tools for assessing the quality of information online.

In addition, a dataset containing sentiment scores assigned by the individual models to approximately seven million Wikipedia articles has been made available on the Hugging Face platform. This resource may serve as a valuable tool for researchers and practitioners interested in natural language processing and bias detection in text. Further details of the analysis can also be found in the supplementary materials.

The research paper was presented at the prestigious IJCAI 2025 conference. The authors of the publication: Włodzimierz Lewoniewski, Milena Stróżyna, Izabela Czumałowska, Aleksandra Wojewoda, Krzysztof Węcel. The publication is available under the DOI: 10.1007/978-3-032-18920-2_34.

This research is supported by the project “OpenFact – artificial intelligence tools for verification of the veracity of information sources and fake news detection” (INFOSTRATEG-I/0035/2021-00), granted within the INFOSTRATEG I program of the National Center for Research and Development, under the topic: Verifying information sources and detecting fake news.